- Welcome to AHXproject.

AHXproject

Recent posts

#51

Ubuntu News / Ubuntu 26.04 has a new boot a...

Last post by tim - Mar 23, 2026, 01:52 AM

Ubuntu 26.04 has a new boot animation (blink and you'll miss it)

Ubuntu 26.04 has a new boot spinner. The new animation is based on the Resolute Raccoon mascot and replaces the one introduced in 25.10.

You're reading Ubuntu 26.04 has a new boot animation (blink and you'll miss it), a blog post from OMG! Ubuntu. Do not reproduce elsewhere without permission.

Categories: News, plymouth, Ubuntu 26.04 LTS

Source: https://www.omgubuntu.co.uk/2026/03/ubuntu-26-04-boot-spinner Mar 19, 2026, 07:25 PM

#52

Ubuntu News / GNOME 50 released – this is w...

Last post by tim - Mar 23, 2026, 01:52 AM

GNOME 50 released – this is what's new

GNOME 50 is out. It enables VRR and fractional scaling by default, expands parental controls, and supports GPU-accelerated remote desktop – and more.

You're reading GNOME 50 released – this is what's new, a blog post from OMG! Ubuntu. Do not reproduce elsewhere without permission.

Categories: News, fractional scaling, GNOME 50, Papers, Ubuntu 26.04 LTS

Source: https://www.omgubuntu.co.uk/2026/03/gnome-50-released Mar 18, 2026, 06:40 PM

#53

9to5Linux / System76 Launches New COSMIC-...

Last post by tim - Mar 18, 2026, 04:20 PM

System76 Launches New COSMIC-Powered Thelio Mira High-Performance Linux PC

System76 launches the next-generation Thelio Mira Linux desktop computer with Pop!_OS Linux 24.04 LTS and the COSMIC desktop environment.

The post System76 Launches New COSMIC-Powered Thelio Mira High-Performance Linux PC appeared first on 9to5Linux - do not reproduce this article without permission. This RSS feed is intended for readers, not scrapers.

Categories: Hardware, News, Linux computer, Linux PC, System76, Thelio Mira

Source: https://9to5linux.com/system76-launches-new-cosmic-powered-thelio-mira-high-performance-linux-pc Mar 17, 2026, 05:36 PM

#54

9to5Linux / TUXEDO Gemini 17 Gen4 Linux L...

Last post by tim - Mar 18, 2026, 04:20 PM

TUXEDO Gemini 17 Gen4 Linux Laptop Now Available with AMD Ryzen 9 9955HX

TUXEDO Gemini 17 Gen4 Linux laptop is now available with AMD Ryzen 9 9955HX, NVIDIA GeForce RTX 5070 Ti, up to 128 GB RAM, and up to 16TB storage.

The post TUXEDO Gemini 17 Gen4 Linux Laptop Now Available with AMD Ryzen 9 9955HX appeared first on 9to5Linux - do not reproduce this article without permission. This RSS feed is intended for readers, not scrapers.

Categories: Hardware, News, Linux laptop, Linux notebook, TUXEDO Gemini 17

Source: https://9to5linux.com/tuxedo-gemini-17-gen4-linux-laptop-now-available-with-amd-ryzen-9-9955hx Mar 17, 2026, 03:36 PM

#55

9to5Linux / KDE Plasma 6.6.3 Makes KWin’s...

Last post by tim - Mar 18, 2026, 04:20 PM

KDE Plasma 6.6.3 Makes KWin's Screencasting Feature More Robust for PipeWire 1.6

KDE Plasma 6.6.3 is now available as the third maintenance update in the KDE Plasma 6.6 desktop environment series with various improvements and bug fixes.

The post KDE Plasma 6.6.3 Makes KWin's Screencasting Feature More Robust for PipeWire 1.6 appeared first on 9to5Linux - do not reproduce this article without permission. This RSS feed is intended for readers, not scrapers.

Categories: Desktops, News, desktop environment, KDE, KDE Plasma, KDE Plasma 6.6

Source: https://9to5linux.com/kde-plasma-6-6-3-makes-kwins-screencasting-feature-more-robust-for-pipewire-1-6 Mar 17, 2026, 02:41 PM

#56

9to5Linux / Blender 5.1 Open-Source 3D Gr...

Last post by tim - Mar 18, 2026, 04:20 PM

Blender 5.1 Open-Source 3D Graphics Software Released with Many New Features

Blender 5.1 open-source 3D graphics software is now available for download with hardware ray-tracing for AMD GPUs and other changes. Here's what's new!

The post Blender 5.1 Open-Source 3D Graphics Software Released with Many New Features appeared first on 9to5Linux - do not reproduce this article without permission. This RSS feed is intended for readers, not scrapers.

Categories: Apps, News, 3D graphics, 3D modeling, Blender

Source: https://9to5linux.com/blender-5-1-open-source-3d-graphics-software-released-with-many-new-features Mar 17, 2026, 01:11 PM

#57

9to5Linux / FFmpeg 8.1 “Hoare” Multimedia...

Last post by tim - Mar 18, 2026, 04:20 PM

FFmpeg 8.1 "Hoare" Multimedia Framework Brings D3D12 H.264/AV1 Encoding

FFmpeg 8.1 open-source multimedia framework is now available for download with D3D12 H.264/AV1 encoding, xHE-AAC Mps212 MPEG-H decoder, and more. Here's what's new!

The post FFmpeg 8.1 "Hoare" Multimedia Framework Brings D3D12 H.264/AV1 Encoding appeared first on 9to5Linux - do not reproduce this article without permission. This RSS feed is intended for readers, not scrapers.

Categories: Apps, News, FFmpeg, multimedia framework

Source: https://9to5linux.com/ffmpeg-8-1-hoare-multimedia-framework-brings-d3d12-h-264-av1-encoding Mar 16, 2026, 10:03 PM

#58

Ubuntu Blog / Building a dry-run mode for t...

Last post by tim - Mar 18, 2026, 04:20 PM

Building a dry-run mode for the OpenTelemetry Collector

Teams continuously deploy programmable telemetry pipelines to production without access to a dry-run mode. At the same time, most organizations lack staging environments that resemble production – especially with regard to observability and other platform-level services. Despite knowing the risks of working without a proper safety harness, most teams have no safe way to determine which telemetry they can cut to improve their signal-to-noise ratio without risking the loss of something important.

So, teams resort to experimenting on live traffic.

What starts as a storage problem quickly becomes a cost problem due to the sheer volume of data stored. Not a "we should probably look into that" problem, but a "this line item is rivaling our compute spend" problem.

Programmable infrastructure usually ships with a way to preview change. Terraform has plan. Databases have EXPLAIN. Compilers have warnings. The OpenTelemetry Collector unfortunately has none of that. Software engineers edit YAML, reload the process, and cross their fingers they didn't just delete something they'll need during an incident. There are proprietary solutions available that help with noise reduction and cost analysis, but nothing in the open-source space.

This gap is what led me to build Signal Studio.

Signal Studio adds a diagnostic layer to OpenTelemetry Collectors – something closer to a "dry-run" or "plan" mode for telemetry pipelines. It does this by combining static configuration analysis with live metrics and an ephemeral OpenTelemetry Protocol (OTLP) tap to evaluate filter behavior against observed traffic.

The problem with "just add more filters"

If you've operated OpenTelemetry Collectors at any meaningful scale, you've probably had a conversation that goes something like this:

"Our telemetry costs are too high."

"OK, let's add some filters."

"Which metrics do we drop?"

"...The ones we don't need?"

The issue isn't that teams don't want to reduce telemetry waste. It's that they lack visibility into what's actually flowing through the pipeline, which metrics are high-volume, which attributes are driving cardinality, and what a given filter expression would actually do. Sure – they can find it out by experimenting, but that's risky. If they get it wrong, they either risk dropping data they'll need during an incident, or not dropping enough data for it to meaningfully improve the signal-to-noise ratio.

Three tiers of insight

Signal Studio works in three layers. Each layer adds more context and enables increasingly specific recommendations.

Tier 1: Static analysis

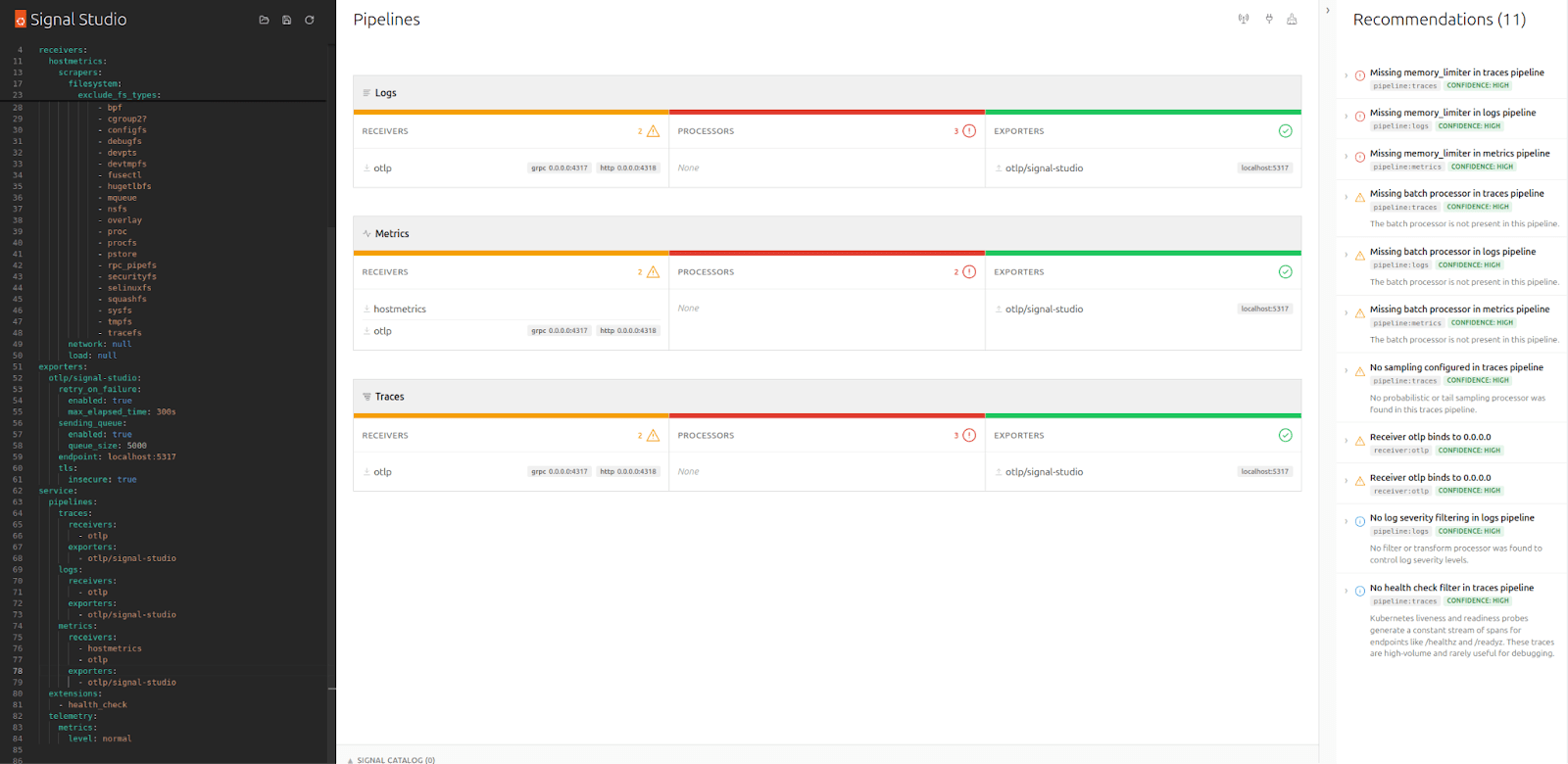

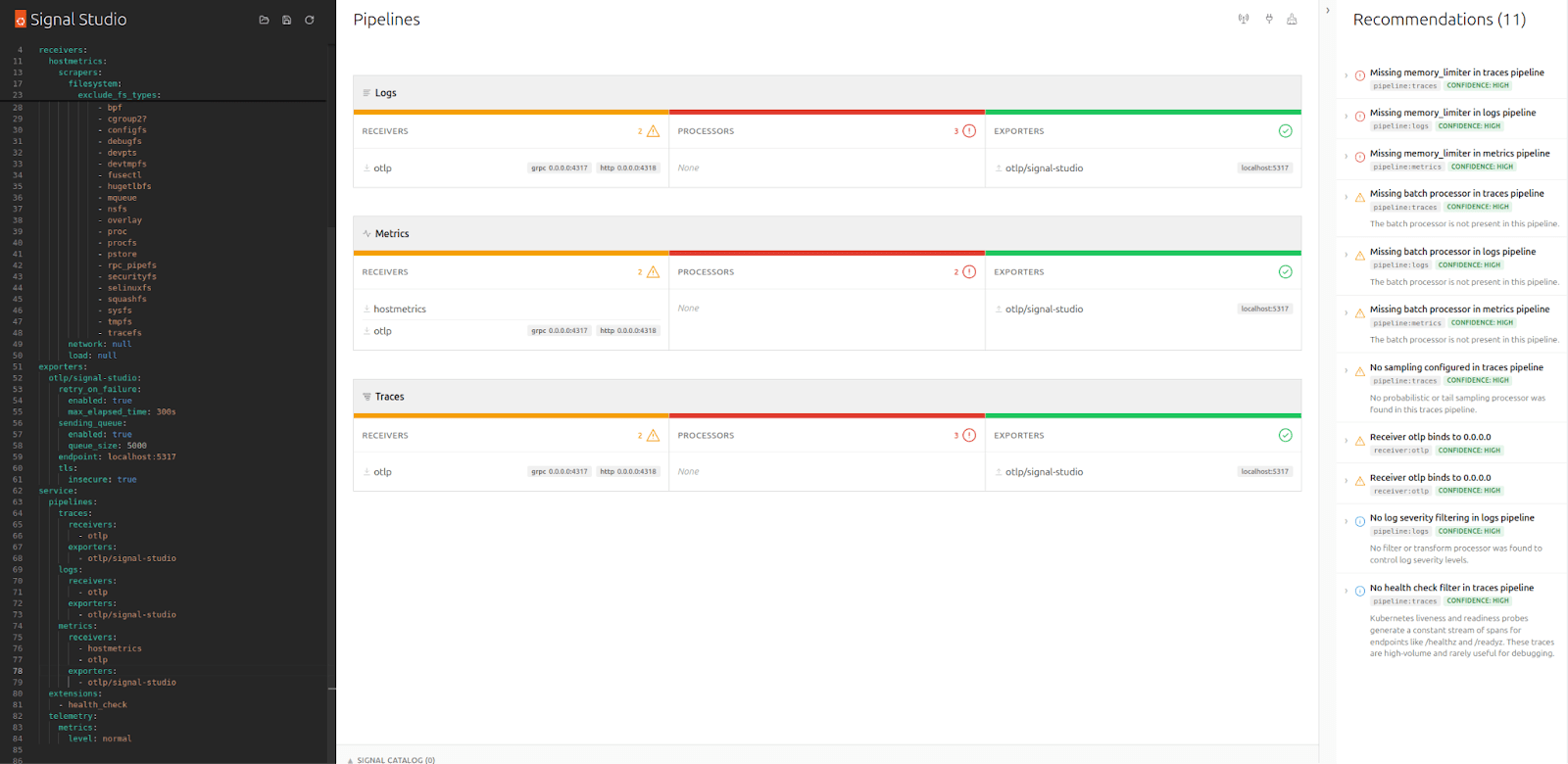

Overview of the User Interface

The first thing Signal Studio does is parse your Collector YAML and visualize the pipeline structure – receivers, processors, exporters – which it groups by signal type. In addition, it runs a set of static rules that catch common misconfigurations such as missing memory_limiter processors, undefined components, and unbounded receivers.

For each finding, the tool presents a severity, an explanation of why it matters, and a small YAML snippet suggesting a potential fix. At this point, no Collector connection is needed, nor is any alteration to your live configuration, making it very low risk compared to experimenting on live traffic.
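To make the idea of static rules concrete, here is a minimal sketch in Python. It is not Signal Studio's implementation: the `Finding` type, the `lint_collector_config` function, and the two rules are illustrative assumptions, and the config is passed in as an already-parsed dictionary rather than read from YAML.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    severity: str
    message: str


def lint_collector_config(config: dict) -> list[Finding]:
    """Run two illustrative static rules over an already-parsed Collector config."""
    findings = []
    kinds = ("receivers", "processors", "exporters")
    # Component IDs defined at the top level of the config, per component kind.
    defined = {kind: set(config.get(kind) or {}) for kind in kinds}
    pipelines = (config.get("service") or {}).get("pipelines") or {}
    for name, pipeline in pipelines.items():
        # Rule 1 (hypothetical): every component a pipeline references must be defined.
        for kind in kinds:
            for component in pipeline.get(kind) or []:
                if component not in defined[kind]:
                    findings.append(Finding(
                        "error",
                        f"pipeline '{name}' uses undefined {kind[:-1]} '{component}'"))
        # Rule 2 (hypothetical): flag pipelines without a memory_limiter processor.
        # Component IDs may carry a "/name" suffix, so only the type part is compared.
        processors = pipeline.get("processors") or []
        if not any(p.split("/")[0] == "memory_limiter" for p in processors):
            findings.append(Finding(
                "warning", f"pipeline '{name}' has no memory_limiter processor"))
    return findings


example_config = {
    "receivers": {"otlp": {}},
    "processors": {"batch": {}},
    "exporters": {"debug": {}},
    "service": {
        "pipelines": {
            "metrics": {
                "receivers": ["otlp"],
                "processors": ["batch", "filter/drop_noise"],
                "exporters": ["debug"],
            }
        }
    },
}

for finding in lint_collector_config(example_config):
    print(finding.severity, finding.message)
```

Because the rules only read a parsed document, a check like this needs no connection to a running Collector.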

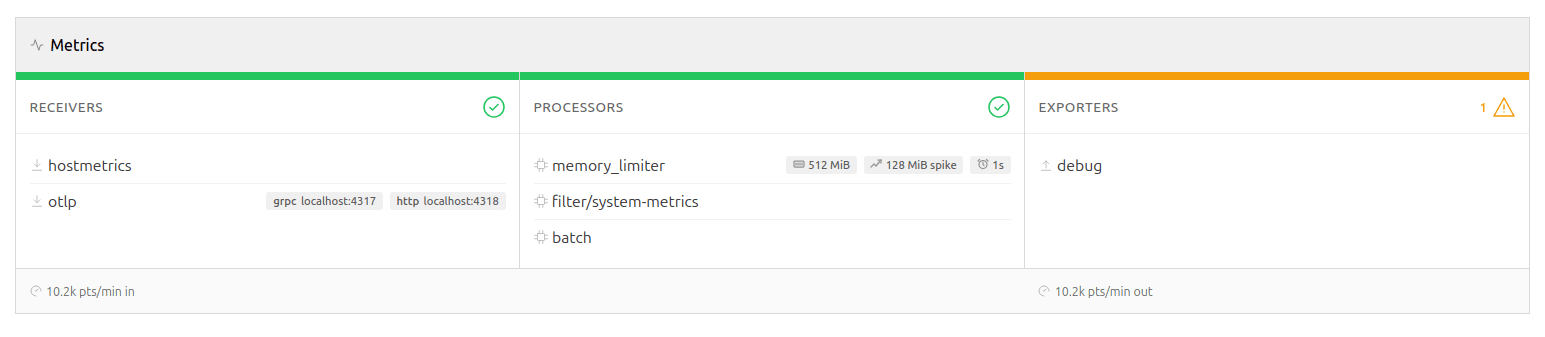

Tier 2: Live metrics

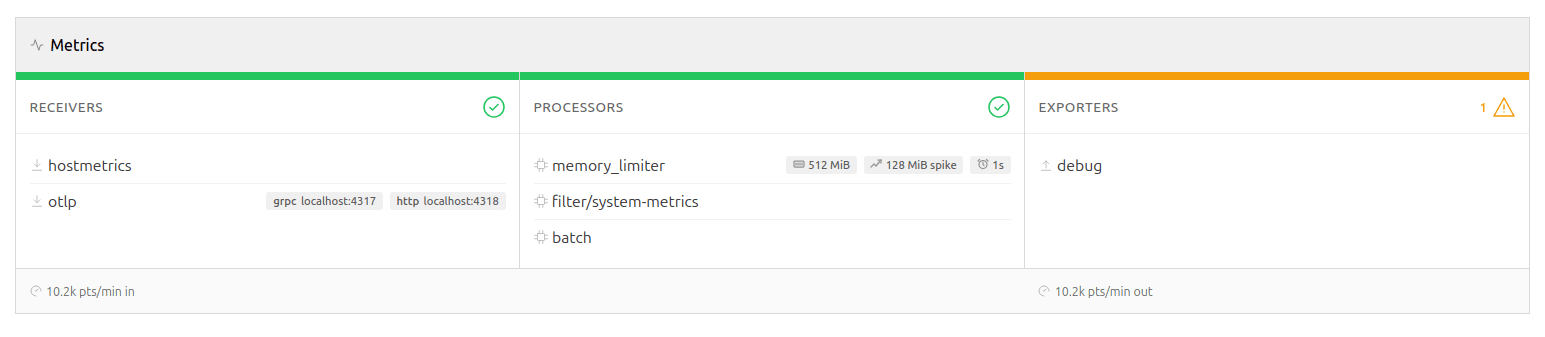

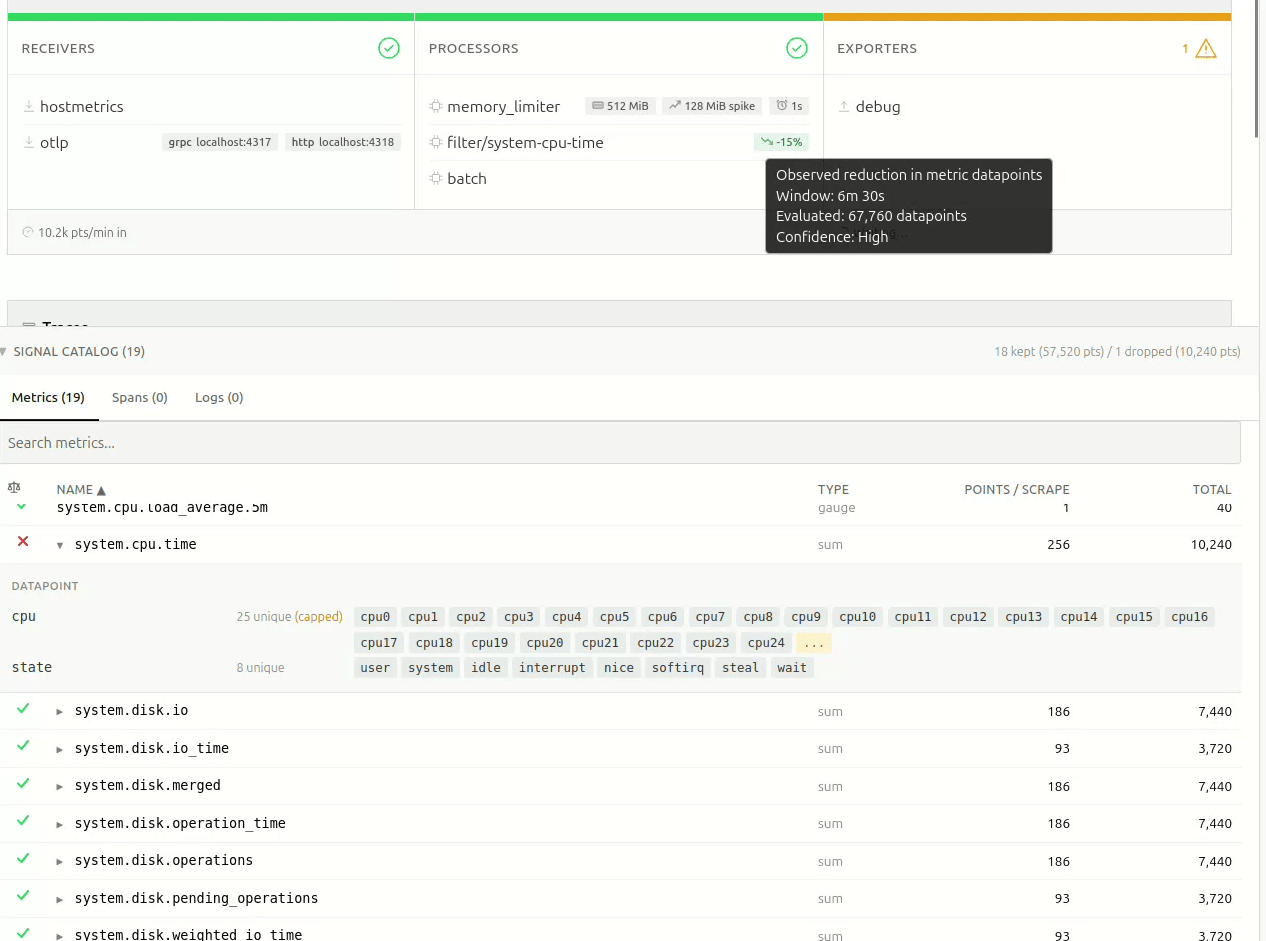

Pipeline overview with live metrics

While the tool's static analysis can tell you what you configured, it won't be able to answer what's actually happening in your pipeline. Connecting Signal Studio to your Collector's metrics endpoint adds a live dimension.

The pipeline diagram lights up with per-signal throughput rates, drop percentages, and exporter queue utilization. This enables additional rules to detect high drop rates, log volume dominance, queue saturation, and receiver-exporter rate mismatches. These are runtime concerns that YAML analysis won't be able to reveal.

While this does require a connection to the Collector, the connection remains read-only, which keeps the blast radius to a minimum and means it cannot harm your live deployment. Signal Studio polls the metrics endpoint at a configurable interval and computes rates server-side. If the connection drops, the static linting and any findings discovered so far remain in place until a connection is available again.
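As a rough sketch of what "computing rates server-side" can look like, the Python below derives a throughput rate and a drop percentage from two polls of cumulative counters. The `Sample` fields are hypothetical stand-ins, not the Collector's actual metric names, and the restart handling is an assumption rather than documented Signal Studio behavior.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    timestamp: float  # seconds since some fixed origin
    accepted: float   # cumulative count of accepted data points (hypothetical field)
    refused: float    # cumulative count of refused data points (hypothetical field)


def rates(prev: Sample, curr: Sample):
    """Return (data points per second, drop percentage) between two polls, or None."""
    elapsed = curr.timestamp - prev.timestamp
    d_accepted = curr.accepted - prev.accepted
    d_refused = curr.refused - prev.refused
    # A non-positive interval or a decreasing counter (for example after a
    # Collector restart) means no trustworthy rate exists for this interval.
    if elapsed <= 0 or d_accepted < 0 or d_refused < 0:
        return None
    total = d_accepted + d_refused
    drop_pct = 100.0 * d_refused / total if total else 0.0
    return total / elapsed, drop_pct


previous = Sample(timestamp=0.0, accepted=1000, refused=0)
current = Sample(timestamp=10.0, accepted=1900, refused=100)
print(rates(previous, current))
```

Returning `None` instead of a number for an unusable interval keeps a counter reset from showing up as a negative or inflated rate.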

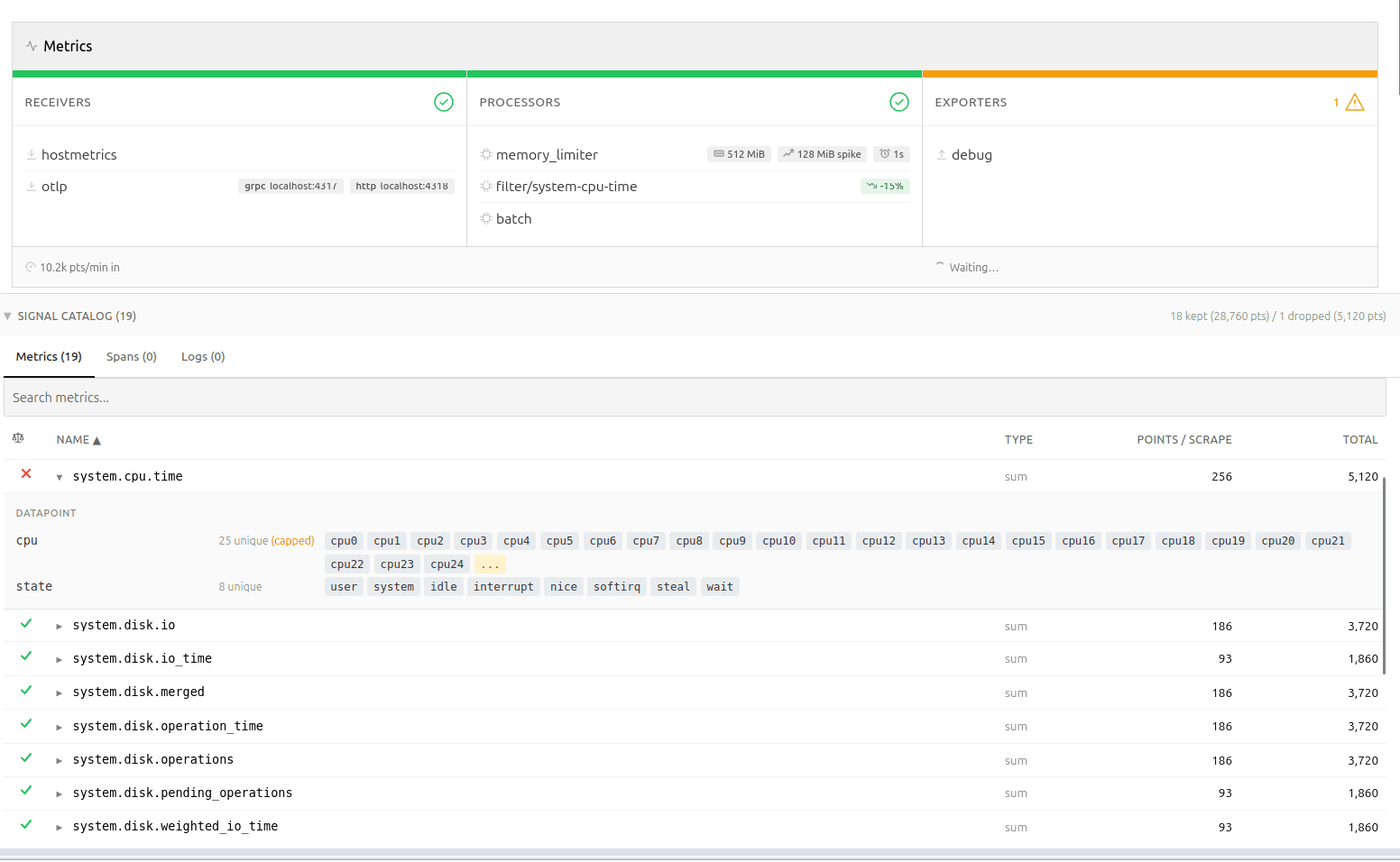

Tier 3: The OTLP sampling tap

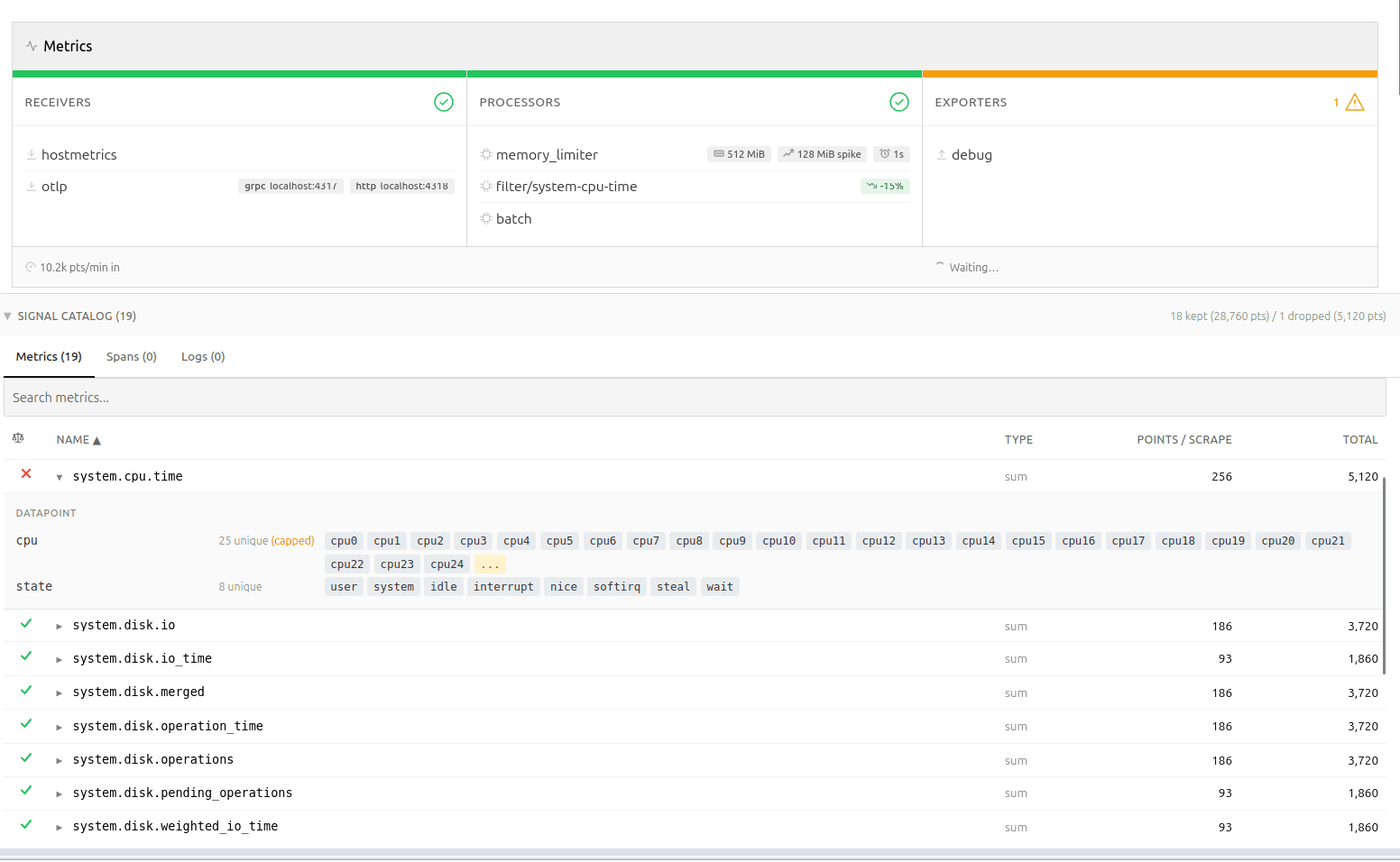

Signal catalog presenting the sampling results

This is where it gets interesting.

Signal Studio can spin up a lightweight OTLP receiver, supporting both gRPC and HTTP, allowing you to tap into your telemetry using a fan-out exporter in your Collector config. Once telemetry starts flowing, Signal Studio catalogs metric names, types, point counts, and – most importantly – attribute metadata. It is worth repeating at this point that nothing is persisted: the catalog is stored only in memory.

Querying the backend

"Why not just query the backend?" you may wonder. This is a legitimate question, and while that is certainly possible, the approach suffers from one critical flaw: the backend sees metrics from every source, not just this Collector.

As you can imagine, attribution in the backend becomes really tricky unless you enrich the data points with additional metadata. That approach is invasive and leaves attribution artifacts in your backend. It also pushes analysis away from the pipeline you actually need to change.

Memory predictability

The attribute tracker is bounded by design: it stores up to 25 sample values per attribute key per metric, then switches to count-only mode. This keeps memory predictable, while still providing enough data for meaningful recommendations.

If a filter expression references an attribute value that wasn't observed within the bounded window, the result becomes "unknown", rather than extrapolated. The tool does not attempt to guess unseen values or predict long-tail behavior. This is a bounded simulation over observed traffic, not a probabilistic estimator.
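A bounded tracker of this kind can be sketched in a few lines of Python. The class and method names are hypothetical, and the three-valued `seen` result reflects one reading of the behavior described above (values become "unknown" once the cap has been hit), not the tool's actual code.

```python
from collections import defaultdict

MAX_SAMPLES = 25  # cap per attribute key per metric, as stated in the article


class AttributeTracker:
    def __init__(self, max_samples: int = MAX_SAMPLES):
        self.max_samples = max_samples
        self.samples = defaultdict(set)  # (metric, key) -> stored sample values
        self.counts = defaultdict(int)   # (metric, key) -> total observations
        self.capped = set()              # slots that switched to count-only mode

    def observe(self, metric: str, key: str, value: str) -> None:
        slot = (metric, key)
        self.counts[slot] += 1
        values = self.samples[slot]
        if value in values:
            return
        if len(values) < self.max_samples:
            values.add(value)
        else:
            # Cap reached: keep counting, but stop storing new values.
            self.capped.add(slot)

    def seen(self, metric: str, key: str, value: str):
        """True if the value was sampled, False if it cannot have occurred
        in the window, None (unknown) if the cap hid later values."""
        slot = (metric, key)
        if value in self.samples.get(slot, ()):
            return True
        return None if slot in self.capped else False


tracker = AttributeTracker()
for i in range(30):
    tracker.observe("http.server.duration", "http.route", f"/route-{i}")

print(len(tracker.samples[("http.server.duration", "http.route")]))
print(tracker.seen("http.server.duration", "http.route", "/route-29"))
```

Memory use is bounded by the number of metric and attribute-key pairs times the cap, regardless of how many distinct values flow through the tap.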

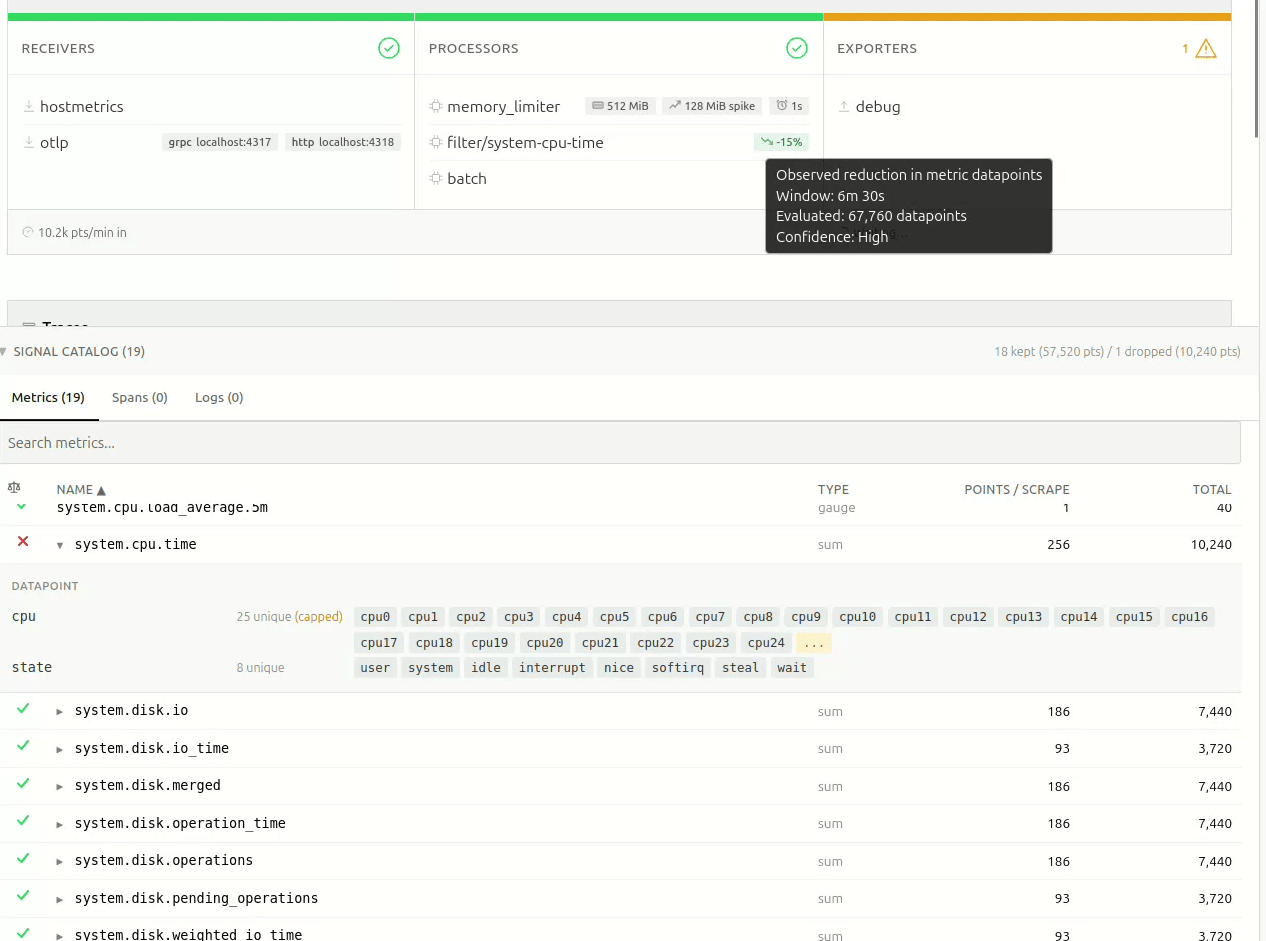

Filter analysis for your pipeline

Telemetry volume change estimations presented live in the interface

With metric names and attributes discovered, Signal Studio can do something that isn't possible with static analysis alone: predict what a filter processor would do, before you apply it.

It parses both legacy include/exclude syntax and modern OpenTelemetry Transformation Language (OTTL) expressions, like name == "...", IsMatch(name, "..."), attribute equality, regex patterns, and HasAttrKeyOnDatapoint.

For each discovered metric, the tool predicts whether the filter would keep it or drop it – or whether it just can't tell, because the attribute's cardinality was too high and the sample window didn't capture the relevant values.
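The keep/drop/unknown outcome can be illustrated with a small three-valued evaluator. This Python sketch does not parse OTTL; it hard-codes two simplified predicate shapes, and all names in it are assumptions. The name match is approximated with Python's `re.search`, and an attribute match is treated as "drop" even though a real filter might remove only some of a metric's data points.

```python
import re
from enum import Enum


class Verdict(Enum):
    KEEP = "keep"
    DROP = "drop"
    UNKNOWN = "unknown"


def predict_name_filter(metric_name: str, pattern: str) -> Verdict:
    """Predict a filter that drops metrics whose name matches a regex."""
    # Metric names are always known, so this prediction is never UNKNOWN.
    return Verdict.DROP if re.search(pattern, metric_name) else Verdict.KEEP


def predict_attribute_filter(sampled_values: set, capped: bool, target: str) -> Verdict:
    """Predict a filter that drops data points where an attribute equals target."""
    if target in sampled_values:
        return Verdict.DROP
    # If sampling hit its cap, the target may have occurred without being stored.
    return Verdict.UNKNOWN if capped else Verdict.KEEP


print(predict_name_filter("http.server.duration", r"^http\."))
print(predict_attribute_filter({"/health"}, capped=True, target="/metrics"))
```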

The result shows up as a pill on the pipeline card: something like -34%, meaning "this filter would reduce datapoint volume by roughly 34%, based on what we've observed." Hover over it, and you immediately get the full picture – the observation window, total data points evaluated, and a confidence level based on sample size.

The confidence calculation is simple and deliberate: fewer than 5,000 observed data points is considered "Low", 5k-50k is "Medium", and above 50k is "High". No false precision. The thresholds are intentionally heuristic and visible; the goal is to communicate window size, not statistical certainty.
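The thresholds translate directly into code. The Python sketch below uses the cut-offs quoted above; the function names, the treatment of exactly 50,000 points as "Medium", and the pill formatting are assumptions made for illustration.

```python
def confidence(observed_points: int) -> str:
    """Map the size of the observation window to a confidence label."""
    if observed_points < 5_000:
        return "Low"
    if observed_points <= 50_000:
        return "Medium"
    return "High"


def projection_pill(total_points: int, dropped_points: int) -> str:
    """Format the projected volume change as a pill label such as '-34%'."""
    if total_points <= 0:
        return "n/a"  # nothing observed, so no projection is possible
    pct = round(100 * dropped_points / total_points)
    return f"-{pct}%"


print(projection_pill(12_000, 4_080), confidence(12_000))
```

With 12,000 observed data points, of which 4,080 would be dropped, this prints `-34% Medium`.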

If filters are already applied upstream in the pipeline, those data points never reach the tap, and their impact cannot be evaluated.

Recommendations that know your data

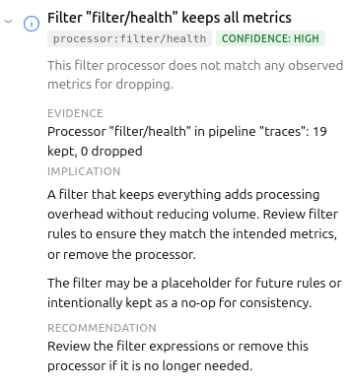

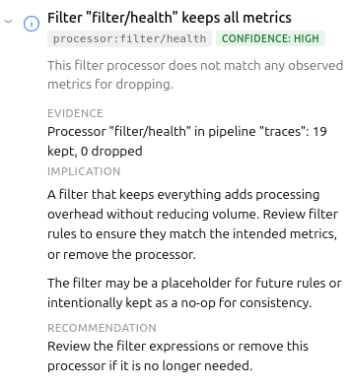

Example recommendation

The third rule tier – catalog rules – is where static analysis meets live discovery. These rules fire only when actual metric data is available, enabling findings like cardinality risks due to high attribute counts, certain metrics dominating the data, or Collector-internal telemetry being kept.

These rules shift the conversation from "you should probably add a filter", to "metric X has a high-cardinality http.route attribute and is a strong candidate for attribute reduction." In several production deployments I tested, single metrics accounted for ~60% of total telemetry volume through the Collector. That's difficult to reason about by reading YAML alone.
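A "dominant metric" rule of the kind described here boils down to computing each metric's share of the observed data points. The Python sketch below is an illustration only: the function name and the 50% threshold are assumptions, and the counts are invented example data.

```python
def dominant_metrics(point_counts: dict, threshold: float = 0.5) -> list:
    """Return (metric, share) pairs whose share of all data points meets the threshold."""
    total = sum(point_counts.values())
    if total == 0:
        return []
    shares = [(name, count / total) for name, count in point_counts.items()]
    # Largest share first, so the strongest candidate for reduction leads the list.
    return sorted(
        (item for item in shares if item[1] >= threshold),
        key=lambda item: item[1],
        reverse=True,
    )


# Invented example counts, not measurements from the article.
catalog = {
    "http.server.duration": 6_000,
    "system.cpu.time": 3_000,
    "process.memory.usage": 1_000,
}
print(dominant_metrics(catalog))
```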

Precise recommendations

Recommendations are only useful if the reader can understand and assess them quickly. They need to be succinct, precise, and consistent.

Signal Studio uses a simple model: evidence → implication → recommendation, wrapped in a confidence indicator and scope tag.

The goal is to make it easy to decide whether to act or defer.
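One way to keep findings consistent is to give them a fixed shape. The Python sketch below models the evidence, implication, and recommendation fields together with a confidence indicator and scope tag; the class name, field types, and rendered layout are hypothetical, and the example values are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    evidence: str        # what was observed
    implication: str     # why it matters
    recommendation: str  # what the reader could do about it
    confidence: str      # for example "Low", "Medium", or "High"
    scope: str           # for example the pipeline the finding applies to

    def render(self) -> str:
        """Render the finding in a fixed order so every finding reads the same way."""
        return (f"[{self.confidence}] [{self.scope}] "
                f"{self.evidence} -> {self.implication} -> {self.recommendation}")


finding = Recommendation(
    evidence="metric X carries a high-cardinality http.route attribute",
    implication="each distinct route adds a separate time series",
    recommendation="consider reducing or dropping the attribute",
    confidence="Medium",
    scope="pipeline: metrics",
)
print(finding.render())
```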

Overarching goals

Working on the first iteration of Signal Studio, I made a few choices that shaped the tool:

Deliberately read-only

Signal Studio never writes to your Collector. The OTLP tap is opt-in, and requires you to modify your own config. This was a deliberate decision – the tool should be safe to point at production, without anyone losing sleep.

Similarly, there's no "apply" button, no GitOps integration, no deployment pipeline. The user stays in control. This might seem like a missing feature, but it's a deliberate scope boundary – the tool diagnoses, the human decides. While every actionable finding includes a YAML snippet you can copy directly into your Collector config, you are the one who decides if, and when, that ever makes it into your pipelines.

Single binary, no persistence

Everything lives in memory. No database, no state files. This makes deployment trivial and keeps the blast radius small. At this point, I don't want to have to bother with the security aspects of running a shadow telemetry data store, with all the PII implications and associated complexity that come with it – and, almost certainly, neither do you.

Honest about uncertainty

As an example: when the attribute tracker caps out at 25 sample values, the filter analysis returns "unknown" instead of guessing. The projection pills show confidence levels. Findings include caveats when the recommendation depends on context (like container networking making 0.0.0.0 binding necessary).

Overpromising erodes trust faster than under-delivering, and when it comes to observability, you often only get one shot at making promises – rightfully so.

What's next?

The tool already does what I initially envisioned, and will only gain new features if they are considered useful enough. There are open questions around alert validation, PII detection, and cardinality estimation. I'll explore those separately.

Programmable infrastructure without a diagnostics layer feels incomplete, and while I believe the outcome of this experiment has been surprisingly positive, I'm curious how others are validating the impact of filter changes before deploying them to production OpenTelemetry Collectors.

You can reach us in the project repository, or on the Ubuntu Community Matrix.

Categories: observability, OpenTelemetry, signal studio, Telemetry

Source: https://ubuntu.com//blog/building-a-dry-run-mode-for-the-opentelemetry-collector Mar 17, 2026, 05:56 PM

Teams continuously deploy programmable telemetry pipelines to production, without having access to a dry-run mode. At the same time, most organizations lack staging environments that resemble production – especially with regards to observability and other platform-level services. Despite knowing the potential risks involved due to the lack of a proper safety harness, most teams have no alternative, safe way to determine what telemetry they can actually cut to improve their signal-to-noise ratio without running the risk of missing something important.

So, teams resort to experimenting on live traffic.

What starts as a storage problem quickly becomes a cost problem due to the sheer volume of data stored. Not a "we should probably look into that," problem, but a "this line item is rivaling our compute spend," problem.

Programmable infrastructure usually ships with a way to preview change. Terraform has plan. Databases have EXPLAIN. Compilers have warnings. The OpenTelemetry Collector unfortunately has none of that. Software engineers edit YAML, reload the process, and cross their fingers they didn't just delete something they'll need during an incident. There are proprietary solutions available that help with noise reduction and cost analysis, but nothing in the open-source space.

This gap is what led me to build Signal Studio .

Signal Studio adds a diagnostic layer to OpenTelemetry Collectors – something closer to a "dry-run" or "plan" mode for telemetry pipelines. It does this by combining static configuration analysis with live metrics and an ephemeral OpenTelemetry Protocol (OTLP) tap to evaluate filter behavior against observed traffic.

The problem with "just add more filters"

If you've operated OpenTelemetry Collectors at any meaningful scale, you've probably had a conversation that goes something like this:

"Our telemetry costs are too high.""OK, let's add some filters.""Which metrics do we drop?" "...The ones we don't need?"

The issue isn't that teams don't want to reduce telemetry waste. It's that they lack visibility into what's actually flowing through the pipeline, which metrics are high-volume, which attributes are driving cardinality, and what a given filter expression would actually do. Sure – they can find it out by experimenting, but that's risky. If they get it wrong, they either risk dropping data they'll need during an incident, or not dropping enough data for it to meaningfully improve the signal-to-noise ratio.

Three tiers of insight

Signal Studio works in three layers. Each layer adds more context and enables increasingly specific recommendations.

Tier 1: Static analysis

Overview of the User Interface

Overview of the User InterfaceThe first thing Signal Studio does is parse your Collector YAML and visualize the pipeline structure – receivers, processors, exporters – which it groups by signal type. In addition, it runs a set of static rules that catch common misconfiguration such as missing memory_limiters, undefined components, and unbounded receivers.

For each finding, the tool presents a severity, an explanation of why it matters, and a small YAML snippet suggesting a potential fix. At this point, no Collector connection is needed, nor is any alteration to your live configuration, making it very low risk compared to experimenting on live traffic.

Tier 2: Live metrics

Pipeline overview with live metrics

Pipeline overview with live metricsWhile the tool's static analysis can tell you what you configured, it won't be able to answer what's actually happening in your pipeline. Connecting Signal Studio to your Collector's metrics endpoint adds a live dimension.

The pipeline diagram lights up with per-signal throughput rates, drop percentages, and exporter queue utilization. This enables additional rules to detect high drop rates, log volume dominance, queue saturation, and receiver-exporter rate mismatches. These are runtime concerns that YAML analysis won't be able to reveal.

While this does require a connection to be established to the Collector, it remains read-only, ensuring the blast-radius is kept to a minimum and that it won't be able to cause any harm to your live deployment. Signal Studio polls the metrics endpoint at a configurable interval and computes rates server-side. If the connection drops, the static linting and any findings discovered so far remain in place until a connection is available again.

Tier 3: The OTLP sampling tap

Signal catalog presenting the sampling results

Signal catalog presenting the sampling resultsThis is where it gets interesting.

Signal Studio can spin up a lightweight OTLP receiver, supporting both gRPC and HTTP, allowing you to tap into your telemetry using a fan-out exporter in your Collector config. Once telemetry starts flowing, Signal Studio catalogs metric names, types, point counts, and – most importantly – attribute metadata. It is worth repeating at this point that nothing is persisted, the catalog is only stored in-memory.

Querying the backend

"Why not just query the backend?" You may wonder. This is a legitimate question, and while that is certainly possible, the approach suffers from one critical flaw. The backend sees metrics from every source, not just this Collector.

As you can imagine, attribution in the backend becomes really tricky unless you enrich the data points with additional metadata. That approach is invasive and leaves attribution artifacts in your backend. It also pushes analysis away from the pipeline you actually need to change.

Memory predictability

The attribute tracker is bounded by design: it stores up to 25 sample values per attribute key per metric, then switches to count-only mode. This keeps memory predictable, while still providing enough data for meaningful recommendations.

If a filter expression references an attribute value that wasn't observed within the bounded window, the result becomes "unknown", rather than extrapolated. The tool does not attempt to guess unseen values or predict long-tail behavior. This is a bounded simulation over observed traffic, not a probabilistic estimator.

Filter analysis for your pipeline

Telemetry volume change estimations presented live in the interface

Telemetry volume change estimations presented live in the interfaceWith metric names and attributes discovered, Signal Studio can do something that isn't possible with static analysis alone: predict what a filter processor would do, before you apply it.

It parses both legacy include/exclude syntax and modern OpenTelemetry Transformation Language (OTTL) expressions, like name == "...", IsMatch(name, "..."), attribute equality, regex patterns, and HasAttrKeyOnDatapoint.

For each discovered metric, the tool predicts whether the filter would keep it or drop it – or whether it just can't tell, because the attribute was of too high cardinality, and the sample window didn't capture the relevant values.

The result shows up as a pill on the pipeline card: something like -34%, meaning "this filter would reduce datapoint volume by roughly 34%, based on what we've observed." Hover over it, and you immediately get the full picture – the observation window, total data points evaluated, and a confidence level based on sample size.

The confidence calculation is simple and deliberate: fewer than 5,000 observed data points are considered "Low", 5k-50k is "Medium", and above 50k is "High". No false precision. The thresholds are intentionally heuristic and visible; the goal is to communicate window size, not statistical certainty.

If filters are already applied upstream in the pipeline, those data points never reach the tap, and their impact cannot be evaluated.

Recommendations that know your data

Example recommendation

Example recommendationThe third rule tier – catalog rules – is where static analysis meets live discovery. These rules fire only when actual metric data is available, enabling findings like cardinality risks due to high attribute counts, certain metrics dominating the data, or Collector-internal telemetry being kept.

These rules shift the conversation from "you should probably add a filter", to "metric X has a high-cardinality http.route attribute and is a strong candidate for attribute reduction." In several production deployments I tested, single metrics accounted for ~60% of total telemetry volume through the Collector. That's difficult to reason about by reading YAML alone.

Precise recommendations

Recommendations are only useful if the reader can understand and assess them quickly. They need to be succinct, precise, and consistent.

Signal Studio uses a simple model: evidence → implication → recommendation, wrapped in a confidence indicator and scope tag.

The goal is to make it easy to decide whether to act or defer.

Overarching goals

Working on the first iteration of Signal Studio, I made a few choices that shaped the tool:

Deliberately Read-only.

Signal Studio never writes to your Collector. The OTLP tap is opt-in, and requires you to modify your own config. This was a deliberate decision – the tool should be safe to point at production, without anyone losing sleep.

Similarly, there's no "apply" button, no GitOps integration, no deployment pipeline. The user stays in control. This might seem like a missing feature, but it's a deliberate scope boundary – the tool diagnoses, the human decides. While every actionable finding includes a YAML snippet you can copy directly into your Collector config, you are the one who decides if, and when, that ever makes it into your pipelines.

Single binary, no persistence

Everything lives in memory. No database, no state files. This makes deployment trivial, and keeps the blast radius small. At this point, I don't want to have to bother with the security aspects of running a shadow telemetry data store with all the PII implications and associated complexity that comes with it – and, almost certainly, neither do you.

Honest about uncertainty

As an example: when the attribute tracker caps out at 25 sample values, the filter analysis returns "unknown," instead of guessing. The projection pills show confidence levels. Findings include caveats when the recommendation depends on context (like container networking making 0.0.0.0 binding necessary).

Overpromising erodes trust faster than under-delivering, and when it comes to observability, you often only get one shot at making promises – rightfully so.

What's next?

The tool already does what I initially envisioned, and will only gain new features if they are considered useful enough. There are open questions around alert validation, PII detection, and cardinality estimation. I'll explore those separately.

Programmable infrastructure without a diagnostics layer feels incomplete, and while I believe the outcome of this experiment has been surprisingly positive, I'm curious how others are validating the impact of filter changes before deploying them to production OpenTelemetry Collectors.

You can reach us in the project repository, or on the Ubuntu Community Matrix.

Teams continuously deploy programmable telemetry pipelines to production, without having access to a dry-run mode. At the same time, most organizations lack staging environments that resemble production – especially with regards to observability and other platform-level services. Despite knowing the potential risks involved due to the lack of a proper safety harness, most teams have [...]

Categories: observability, OpenTelemetry, signal studio, Telemetry

Source: https://ubuntu.com//blog/building-a-dry-run-mode-for-the-opentelemetry-collector Mar 17, 2026, 05:56 PM

#59

Ubuntu Blog / Canonical announces it will d...

Last post by tim - Mar 18, 2026, 04:20 PM

Canonical announces it will distribute NVIDIA DOCA-OFED in Ubuntu

Following the recent announcement regarding the distribution of the NVIDIA CUDA toolkit, Canonical, the publishers of Ubuntu, will integrate and distribute the NVIDIA DOCA-OFED networking driver with Ubuntu, further accelerating the enablement of NVIDIA platforms.

NVIDIA DOCA-OFED is a widely adopted high-performance networking stack, commonly used in large-scale AI factories and HPC clusters. By exposing advanced capabilities like RDMA (remote direct memory access) and NVIDIA GPUDirect, NVIDIA DOCA-OFED provides CPU offload, lower and more predictable tail latency, and sustained throughput under load. This enables ultra-low latency and high-throughput data transfers – essential for training large language models (LLMs) and running complex distributed simulations. DOCA‑OFED is delivered as the DOCA‑Host networking driver stack, including kernel drivers, user‑space libraries, and management tools for supported NVIDIA network adapters.

As data center workloads move toward massive-scale AI and HPC, the underlying networking fabric becomes the critical bottleneck. By bringing NVIDIA DOCA-OFED directly into Ubuntu, Canonical will enable infrastructure architects and developers to build faster, more reliable, and more securely designed networks.

NVIDIA DOCA-OFED with a single command

Historically, system administrators had to manage networking drivers through external installers or complex manual builds, often leading to version conflicts or kernel mismatch issues during OS updates.

The new workflow will remove common operational pain points such as kernel drift, driver incompatibility, and CI breakage following kernel or OS upgrades. The distribution of NVIDIA DOCA-OFED through Ubuntu's repositories will enable a "single-command" installation experience.

- Ubuntu integration: NVIDIA DOCA-OFED will be included in Ubuntu's repositories, ensuring that drivers are tested and optimized for the specific Ubuntu kernel you are running.

- Simplified lifecycle management: Once integrated, application developers and system administrators can manage networking updates through standard apt commands, significantly reducing the operational overhead of maintaining high-performance clusters.

- Predictable deployments: Organizations can now declare networking dependencies in their automation scripts, with Ubuntu managing the installation and hardware compatibility across diverse NVIDIA networking hardware.
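To make the "kernel mismatch" problem concrete, here is a minimal Python sketch of the kind of pre-upgrade check that teams tend to script by hand when a driver is managed outside the distribution. The function names and the example kernel release strings are hypothetical and are not part of any Canonical or NVIDIA tooling. How the list of kernels a driver was built for gets discovered is deliberately left out.

```python
import platform


def kernels_without_driver(installed_kernels, driver_kernels):
    """Return installed kernel releases that have no matching driver build."""
    return sorted(set(installed_kernels) - set(driver_kernels))


def running_kernel_covered(driver_kernels, running=None):
    """True if the running kernel release is one the driver was built for."""
    if running is None:
        running = platform.release()  # same string that `uname -r` reports
    return running in set(driver_kernels)


if __name__ == "__main__":
    # Illustrative values only: a host that received a kernel update
    # while the out-of-tree driver was built for the older kernel.
    installed = ["6.8.0-31-generic", "6.8.0-35-generic"]
    built_for = ["6.8.0-31-generic"]
    print(kernels_without_driver(installed, built_for))
    print(running_kernel_covered(built_for, running="6.8.0-35-generic"))
```

When the driver ships through the same repositories as the kernel, this bookkeeping is expected to move into the packaging itself rather than into site-specific scripts.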

Ubuntu is the world's most popular Linux distribution for the cloud and edge. Organizations choosing Ubuntu benefit from a platform that is trusted and easy to use:

- Long-term support (LTS): Ubuntu offers LTS releases, which are guaranteed to receive security updates for at least five years.

- Familiar Tooling: Using the Advanced Package Tool (APT), projects can scale from a single developer workstation to thousands of decentralized nodes while preserving the integrity of the open source software supply chain.

- Ubuntu Pro: Ubuntu Pro is Canonical's comprehensive subscription for open source security, support, and compliance. It gives you access to a trusted open source repository, compliance profiles for the most stringent security standards, and up to 15 years of security maintenance. This ensures that even as networking standards evolve, your mission-critical infrastructure remains protected against emerging vulnerabilities.

Ubuntu Pro is free for personal use on up to 5 machines, and enterprises can try it for free for 30 days.

Learn more about Ubuntu Pro

Making high-performance networking operationally sustainable

High-performance networking has quietly become one of the hardest operational problems in modern data centers, but by distributing NVIDIA DOCA-OFED in Ubuntu, Canonical is helping enterprises address the problem. Infrastructure teams no longer need to choose between speed and stability, or between vendor innovation and OS consistency.

For operators building AI factories, HPC clusters, or accelerated cloud platforms, this integration offers a cleaner supply chain, simpler upgrades, and a networking stack that evolves in parallel with the operating system. It is a pragmatic step toward making high-performance networking a first-class, operationally manageable component of modern Linux infrastructure.

About Canonical

Canonical, the publisher of Ubuntu, provides open source security, support, and services. Our portfolio covers critical systems, from the smallest devices to the largest clouds, from the kernel to containers, from databases to AI. With customers that include top tech brands, emerging startups, governments and home users, Canonical delivers trusted open source for everyone.

Learn more at https://canonical.com/

Today Canonical, the publishers of Ubuntu, announced that it will integrate and distribute the NVIDIA DOCA-OFED networking driver with Ubuntu.

Categories: nvidia, Ubuntu

Source: https://ubuntu.com//blog/canonical-announces-it-will-distribute-nvidia-doca-ofed-in-ubuntu Mar 17, 2026, 12:00 AM

#60

Ubuntu Blog / Meet Canonical at NVIDIA GTC ...

Last post by tim - Mar 18, 2026, 04:20 PM

Meet Canonical at NVIDIA GTC 2026

Previewing at the event: NVIDIA CUDA support in Ubuntu 26.04 LTS, NVIDIA Vera Rubin NVL72 architecture support in Ubuntu 26.04 LTS, Canonical's official Ubuntu image for NVIDIA Jetson Thor, upcoming support for NVIDIA DGX Station and NVIDIA DOCA-OFED, and NVIDIA RTX PRO 4500 support.

NVIDIA GTC 2026 is here, bringing together the technologies defining the next wave of AI innovation. Developers, researchers, and business leaders are converging to explore physical AI, AI factories, agentic AI, and high-performance inference. Across these rapidly evolving domains, one shared requirement remains constant: a secure, performant, and fully supported operating system that integrates seamlessly with NVIDIA AI infrastructure.

With Ubuntu 26.04 LTS, Canonical is continuing its collaboration with NVIDIA by directly distributing NVIDIA CUDA in Ubuntu, and by delivering day-one platform readiness for NVIDIA Vera Rubin NVL72 rack-scale architecture. Together, these advancements provide developers and enterprises with a stable, enterprise-grade foundation for building, scaling, and operating AI workloads across edge, workstation, and data center environments.

Sneak peek: what you can expect at the Canonical booth this year

Join the Canonical team at Booth #3017 to see how we're bridging the gap between AI experimentation and production. Our engineers will be on-site at the San Jose McEnery Convention Center demonstrating full-stack open source solutions, from optimized deployment workflows to real-world architectures.

Let's dive into what you can expect.

CUDA support in Ubuntu 26.04 LTS

Canonical will directly distribute NVIDIA CUDA within Ubuntu 26.04 LTS, simplifying how developers access and maintain GPU-accelerated computing environments. By integrating CUDA into the Ubuntu archive, Canonical will reduce dependency fragmentation and eliminate the need for manual toolkit management across heterogeneous fleets.

For AI engineers and data scientists, this means CUDA libraries, drivers, and associated components will align with Ubuntu's lifecycle enterprise support model. This integration strengthens Ubuntu as a trusted base layer for high-performance AI, HPC workloads, and accelerated data processing pipelines. Distributing CUDA within Ubuntu 26.04 LTS ensures that developers can build once and deploy consistently, from local development environments to large-scale production clusters.

Vera Rubin NVL72 architecture support in Ubuntu 26.04 LTS

Ubuntu 26.04 LTS, which releases in April 2026, introduces platform readiness for NVIDIA's Vera Rubin NVL72 architecture, enabling early adoption of next-generation accelerated computing systems. Canonical is working closely with NVIDIA to ensure kernel, driver, and user-space integration are validated for enterprise deployment on Vera Rubin NVL72-based platforms.

This support provides a stable operating system foundation for customers adopting new GPU and system architectures as they emerge. By delivering coordinated enablement across drivers, firmware, and core system components, Ubuntu reduces configuration risk and accelerates time to production.

For organizations planning transitions to next-generation AI infrastructure, Ubuntu's Vera Rubin NVL72 readiness ensures continuity across development, testing, and deployment environments. Enterprises can adopt new NVIDIA hardware with confidence that long-term support is integrated into the platform.

Official Ubuntu 24.04 LTS image for NVIDIA Jetson Thor

Canonical will be on hand to show you the official Ubuntu 24.04 LTS image for NVIDIA Jetson Thor. This certified Ubuntu image, developed in collaboration with NVIDIA, provides a stable, supported, and compliant operating system layer for production environments – including in robotics, physical AI, and real-time reasoning.

For users of the NVIDIA JetPack SDK and its integrated AI stack, the Ubuntu image for Jetson Thor completes the circle and ensures that they can move at pace to deployment, on a trusted, enterprise-grade foundation. Through Ubuntu Pro, Canonical's comprehensive subscription for open source security, developers benefit from up to 15 years of patching and maintenance for Ubuntu, as well as compliance tooling for frameworks including FIPS and DISA-STIG.

We'll have an Ubuntu image running on an NVIDIA Jetson Thor dev kit – stop by our booth to see what it could do for you.

Deploying AI with NVIDIA Nemotron inference snaps on NVIDIA DGX Spark

Deploying large language models frequently introduces dependency conflicts, environment drift, and version management complexity. Canonical addresses this challenge through inference snaps – immutable, containerized packages that bundle optimized AI models with all required runtimes and libraries, optimized for the target silicon.

Canonical recently demonstrated inference snaps on NVIDIA DGX Spark, where a single installation command delivered silicon-optimized models to any Ubuntu system. Building on this momentum, Canonical is collaborating with NVIDIA on packaging and distributing the NVIDIA Nemotron-3 family of open models. Inference snaps provide reproducibility, simplified deployment, and hardware-level optimization across Ubuntu devices, enabling consistent AI execution from local machines to enterprise clusters.

Stop by our booth to see inference snaps running on NVIDIA DGX Spark, and witness first-hand how you can harness powerful AI computing, literally at your desk.

Discover Canonical's latest hardware enablements

We'll be unveiling support for the NVIDIA DGX Station GB300 and NVIDIA RTX PRO 4500 Blackwell Workstation Edition, along with native NVIDIA DOCA-OFED support. Ubuntu is ready for the next wave of accelerated computing. Stop by our booth to chat about how these integrations can help stabilize your organization's AI stack. Here's a quick breakdown of what's new.

DGX Station GB300: Ubuntu with NVIDIA AI Developer Tools

Ubuntu is being optimized as a fully validated OS for the newly announced DGX Station GB300. Think of it as a "data center at your desk": with 775GB of coherent memory and 20 PFLOPS of performance, it's built for heavy-duty local development.

This enablement ensures your team can prototype locally on the Blackwell architecture and scale seamlessly to the cloud with zero configuration drift. To find out more, come and find us at our booth – we're excited to talk to you.

Ubuntu is ready for NVIDIA RTX PRO 4500 Blackwell Server Edition

We are also enabling official Ubuntu support for the new NVIDIA RTX PRO 4500 Blackwell Server Edition. This single-slot, power-efficient universal GPU is a game-changer for high-density professional environments from data center to the edge. Our focus is on stable driver integration and predictable support lifecycles, which can help you deploy NVIDIA Blackwell-based performance across your on-prem and edge infrastructure.

Upcoming DOCA-OFED integration

We're working to bring the NVIDIA DOCA-OFED networking driver directly into Ubuntu's native repositories. This integration is designed for high-throughput AI pipelines where RDMA and GPUDirect are essential for minimizing latency.

By moving this into the native OS layer, we're aiming to eliminate manual driver management and the "kernel drift" that often breaks high-performance clusters. Come talk to us about how this native support can simplify your large-scale training environments.

About Canonical

Canonical, the publisher of Ubuntu, provides open source security, support, and services. Our portfolio covers critical systems, from the smallest devices to the largest clouds, from the kernel to containers, from databases to AI. With customers that include top tech brands, emerging startups, governments and home users, Canonical delivers trusted open source for everyone.

Learn more at https://canonical.com/

Learn more

Visit us at GTC booth #3017 in the exhibitor hall.

Find out more about Canonical's collaboration with NVIDIA.

Categories: AI/ML, gtc, nvidia, Ubuntu

Source: https://ubuntu.com//blog/nvidia-gtc-2026 Mar 16, 2026, 10:30 PM